this post was submitted on 05 Feb 2024

667 points (87.9% liked)

Memes

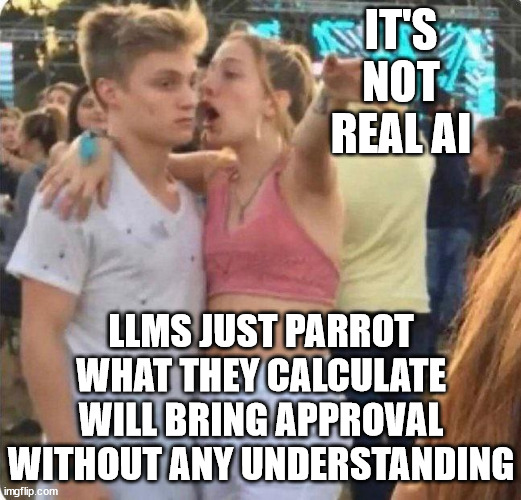

It's dangerous because a significant number of jobs, and the people doing them, consist of exactly that, which means they can be replaced...

This post isn't true: LLMs do have an understanding of things.

SELF-RAG: Improving the Factual Accuracy of Large Language Models through Self-Reflection

Chess-GPT's Internal World Model

POKÉLLMON: A Human-Parity Agent for Pokémon Battle with Large Language Models

Language Models Represent Space and Time

Whilst everything you linked is great research which demonstrates the vast capabilities of LLMs, none of it demonstrates understanding as most humans know it.

This argument always boils down to one's definition of the word "understanding". For me, that word implies a degree of consciousness; for others, apparently not.

To quote GPT-4:

You are moving the goalposts.

"Understanding" can only be granted once you reach old age as a human, and only if you have meditated in a cave.

That's my definition of it.

No one is moving goalposts; there is just a deeper meaning behind the word "understanding" than perhaps you recognise.

The concept of understanding is poorly defined, which is where the confusion arises, but it is definitely not a direct synonym for pattern matching.