this post was submitted on 13 Sep 2023


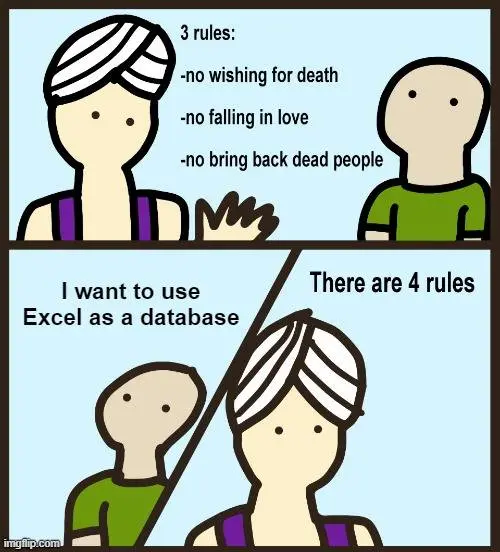

It can handle more than a million rows so why not?

In my experience it doesn't work well when you have more than a couple of people editing the file. My company had a group of ten modifying the same file in real time; it led to huge desync problems.

I live in Excel hell and even that made me shudder. Just work on separate files and have a master spreadsheet append everything with Power Query.

I made a similar reply higher up and I fucking hate that that's a solution, but it legitimately would work in this use case. I frequently deal with 1M+ row data sets and our API can only export like 20k rows at a time, so I have a script make the pulls into a folder and I just use PQ to append the whole fucking folder into one data set. You don't even have to load the table at that point; you can pull as-is from the data model to BI or make a pivot or whatever else you're trying to do with that much data.
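For anyone wondering what "a script makes the pulls into a folder" might look like, here's a rough Python sketch, not my actual script. The names `fetch_page`, `export_in_chunks`, `append_folder`, and the `chunk_*.csv` file naming are all made up for illustration; `fetch_page(offset, limit)` stands in for whatever your API's export call is. The second function just does in Python what the PQ folder append does.

```python
import csv
from pathlib import Path
from typing import Callable, Dict, List

Row = Dict[str, object]


def export_in_chunks(fetch_page: Callable[[int, int], List[Row]],
                     out_dir: Path, page_size: int = 20_000) -> int:
    """Pull pages until the source returns nothing; write one CSV per page.

    fetch_page is a hypothetical stand-in for the real export call.
    Returns the number of chunk files written.
    """
    out_dir.mkdir(parents=True, exist_ok=True)
    offset = 0
    files_written = 0
    while True:
        rows = fetch_page(offset, page_size)
        if not rows:
            break
        # Zero-padded names keep the chunks in pull order when sorted.
        path = out_dir / f"chunk_{files_written:05d}.csv"
        with path.open("w", newline="", encoding="utf-8") as f:
            writer = csv.DictWriter(f, fieldnames=list(rows[0].keys()))
            writer.writeheader()
            writer.writerows(rows)
        files_written += 1
        offset += len(rows)
    return files_written


def append_folder(folder: Path) -> List[Dict[str, str]]:
    """Append every chunk CSV in the folder into one list of rows.

    Values come back as strings, since that is how csv.DictReader reads them.
    """
    combined: List[Dict[str, str]] = []
    for path in sorted(folder.glob("chunk_*.csv")):
        with path.open(newline="", encoding="utf-8") as f:
            combined.extend(csv.DictReader(f))
    return combined
```

In practice you'd point Power Query's folder source at `out_dir` instead of calling `append_folder`, which is only here to show the append step end to end.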

Parent company doesn't want ANYONE to have direct read access to the database, only the scant few heavily formatted reports the user-facing software will allow. Data analysis still needs to get done though, so...

Yeah. PQ -> Data Model saves my ass and my co-workers think I'm a wizard.

That, and learning how to quietly exploit minor vulnerabilities in the software to get raw tables I "shouldn't" have and telling not one soul has been a winning combo!