Iiinteresting. I'm on the larger AB350-Gaming 3 and it's got REV: 1.0 printed on it. No problems with the 5950X so far. 🤐 Either sheer luck or there could have been updated units before they officially changed the rev marking.

lightrush

On paper it should support it. I'm assuming it's the ASRock AB350M. With a certain BIOS version of course. What's wrong with it?

B350 isn’t a very fast chipset to begin with

For sure.

I’m willing to bet the CPU in such a motherboard isn’t exactly current-gen either.

Reasonable bet, but it's a Ryzen 9 5950X with 64GB of RAM. I'm pretty proud of how far I've managed to stretch this board. 😆 At this point I'm waiting for blown caps, but the case temp is pretty low so it may end up trucking along for a surprisingly long time.

Are you sure you’re even running at PCIe 3.0 speeds too?

So given the CPU, it should be PCIe 3.0, but that doesn't remove any of the queues/scheduling suspicions for the chipset.

I'm now replicating data out of this pool and the read load looks perfectly balanced. Bandwidth's fine too. I think I have no choice but to benchmark the disks individually outside of ZFS once I'm done with this operation in order to figure out whether any show problems. If not, they'll go in the spares bin.
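For the per-disk benchmark outside of ZFS, a minimal sketch of a sequential read test might look like the following. The function name, the 1 GiB read size and the 1 MiB block size are my own picks for illustration, not anything ZFS prescribes. It only ever opens the path read-only. Reading a raw device typically needs root, and the page cache can inflate the numbers on repeat runs, so a dedicated tool such as fio is the more usual choice for anything rigorous.

```python
import os
import sys
import time


def sequential_read(path, total_bytes=1 << 30, block_size=1 << 20):
    """Read up to total_bytes from path in block_size chunks.

    Returns (bytes_read, megabytes_per_second). Opens the path read-only.
    """
    fd = os.open(path, os.O_RDONLY)
    try:
        done = 0
        start = time.perf_counter()
        while done < total_bytes:
            chunk = os.read(fd, min(block_size, total_bytes - done))
            if not chunk:  # end of file or device reached early
                break
            done += len(chunk)
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
    rate = done / elapsed / 1e6 if elapsed > 0 else float("inf")
    return done, rate


if __name__ == "__main__":
    # Pass one or more device or file paths on the command line.
    for target in sys.argv[1:]:
        nbytes, rate = sequential_read(target)
        print(f"{target}: read {nbytes} bytes at ~{rate:.0f} MB/s")
```

Running it against each disk in turn (for example a path under `/dev/disk/by-id/`) and comparing the rates would show whether one drive is slower on its own.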

I put the low IOPS disk in a good USB 3 enclosure, hooked to an on-CPU USB controller. Now things are flipped:

                                            capacity     operations     bandwidth
    pool                                  alloc   free   read  write   read  write
    ------------------------------------  -----  -----  -----  -----  -----  -----
    storage-volume-backup                 12.6T  3.74T      0    563      0   293M
      mirror-0                            12.6T  3.74T      0    563      0   293M
        wwn-0x5000c500e8736faf                -      -      0    406      0   146M
        wwn-0x5000c500e8737337                -      -      0    156      0   146M
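One way to read those numbers: both mirror members move the same 146M, so the difference in write operations means a different average write size per disk. A rough sketch of that arithmetic, assuming the `M` suffix in `zpool iostat` means MiB (the helper names here are made up for illustration):

```python
def parse_size(text):
    """Convert zpool-style values such as '146M' or '563' to a plain number."""
    units = {"K": 2**10, "M": 2**20, "G": 2**30, "T": 2**40}
    if text[-1] in units:
        return float(text[:-1]) * units[text[-1]]
    return float(text)


# The two per-disk rows from the output above.
rows = """
wwn-0x5000c500e8736faf - - 0 406 0 146M
wwn-0x5000c500e8737337 - - 0 156 0 146M
"""


def avg_write_kib(line):
    """Return (disk name, average KiB per write operation) for one row."""
    name, _alloc, _free, _read_ops, write_ops, _read_bw, write_bw = line.split()
    return name, parse_size(write_bw) / parse_size(write_ops) / 1024


results = dict(avg_write_kib(line) for line in rows.strip().splitlines())
for name, kib in results.items():
    print(f"{name}: ~{kib:.0f} KiB per write")
```

That works out to roughly 368 KiB per write on one disk and roughly 958 KiB on the other. So the "low IOPS" disk isn't moving less data, it's receiving fewer, larger writes, which could point at how requests get queued and merged on each path rather than at the disk itself. That last part is only a guess.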

You might be right about the link problem.

Looking at the B350 diagram, the whole chipset is hooked to the CPU via a PCIe 3.0 x4 link. The other pool (the source) is hooked via a USB controller on the chipset. The SATA controller is also on the chipset, so it shares the chipset-CPU link too. I'm pretty sure I'm also using all the PCIe links the chipset provides for SSDs. So that's about 4GB/s total for the whole chipset. Now, I'm probably not saturating the whole link in this particular workload, but perhaps there's another related bottleneck.
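To put a number on the link budget, here is a back-of-the-envelope sketch. It uses the standard PCIe 3.0 figures (8 GT/s per lane, 128b/130b encoding) and assumes the 293M in the `zpool iostat` output means MiB/s. It ignores packet and protocol overhead, so the real ceiling is somewhat lower.

```python
def pcie3_bandwidth_mb_s(lanes):
    """Raw PCIe 3.0 bandwidth per direction in MB/s, before protocol overhead."""
    transfers_per_s = 8e9   # 8 GT/s per lane
    encoding = 128 / 130    # 128b/130b line encoding
    return lanes * transfers_per_s * encoding / 8 / 1e6


link = pcie3_bandwidth_mb_s(4)
observed = 293 * 2**20 / 1e6  # pool write bandwidth, assuming M = MiB

print(f"x4 link: ~{link:.0f} MB/s per direction")
print(f"pool writes: ~{observed:.0f} MB/s ({observed / link:.0%} of the link)")
```

The x4 link comes out at about 3.9 GB/s per direction, and the pool writes are under a tenth of that, which fits the hunch that raw link bandwidth isn't the limit here even with the source pool and the SSDs sharing it.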

Turns out the on-CPU SATA controller isn't available when the NVMe slot is used. 🫢 Swapped SATA ports, no diff. Put the low IOPS disk in a good USB 3 enclosure, hooked to an on-CPU USB controller. Now things are flipped:

                                            capacity     operations     bandwidth
    pool                                  alloc   free   read  write   read  write
    ------------------------------------  -----  -----  -----  -----  -----  -----
    storage-volume-backup                 12.6T  3.74T      0    563      0   293M
      mirror-0                            12.6T  3.74T      0    563      0   293M
        wwn-0x5000c500e8736faf                -      -      0    406      0   146M
        wwn-0x5000c500e8737337                -      -      0    156      0   146M

Interesting. SMART looks pristine on both drives. Brand new drives - Exos X22. Doesn't mean there isn't an impending problem of course. I might try shuffling the links to see if that changes the behaviour, as suggested in the other comment. Both are currently hooked to an AMD B350 chipset SATA controller. There are two ports that should be hooked to the on-CPU SATA controller. I imagine the two SATA controllers don't share bandwidth. I'll try putting one disk on the on-CPU controller.

- Lenovo ThinkCentre / Dell OptiPlex USFF machine like the M710q.

  - Secondary NVMe or SATA SSD for a RAID1 mirror

    - Use LVMRAID for this. It uses mdraid underneath but it's easier to manage

- External USB disks for storage

  - WD Elements generally work well when well ventilated

  - OWC Mercury Elite Pro Quad has a very well implemented USB path and has been problem-free in my testing

- Debian / Ubuntu LTS

  - ZFS for the disk storage

- Backups may require a second copy or similar of this setup so keep that in mind when thinking about the storage space and cost

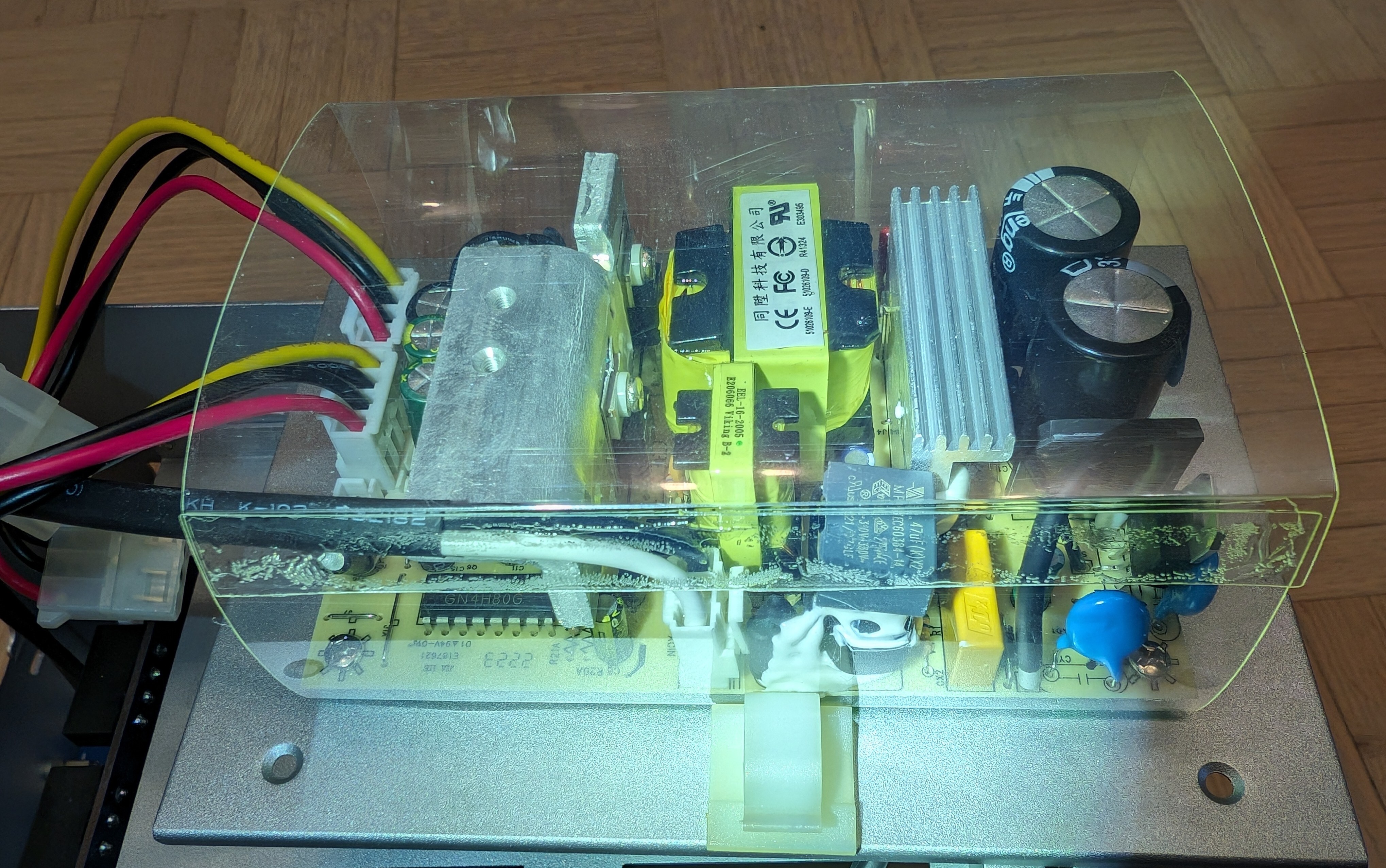

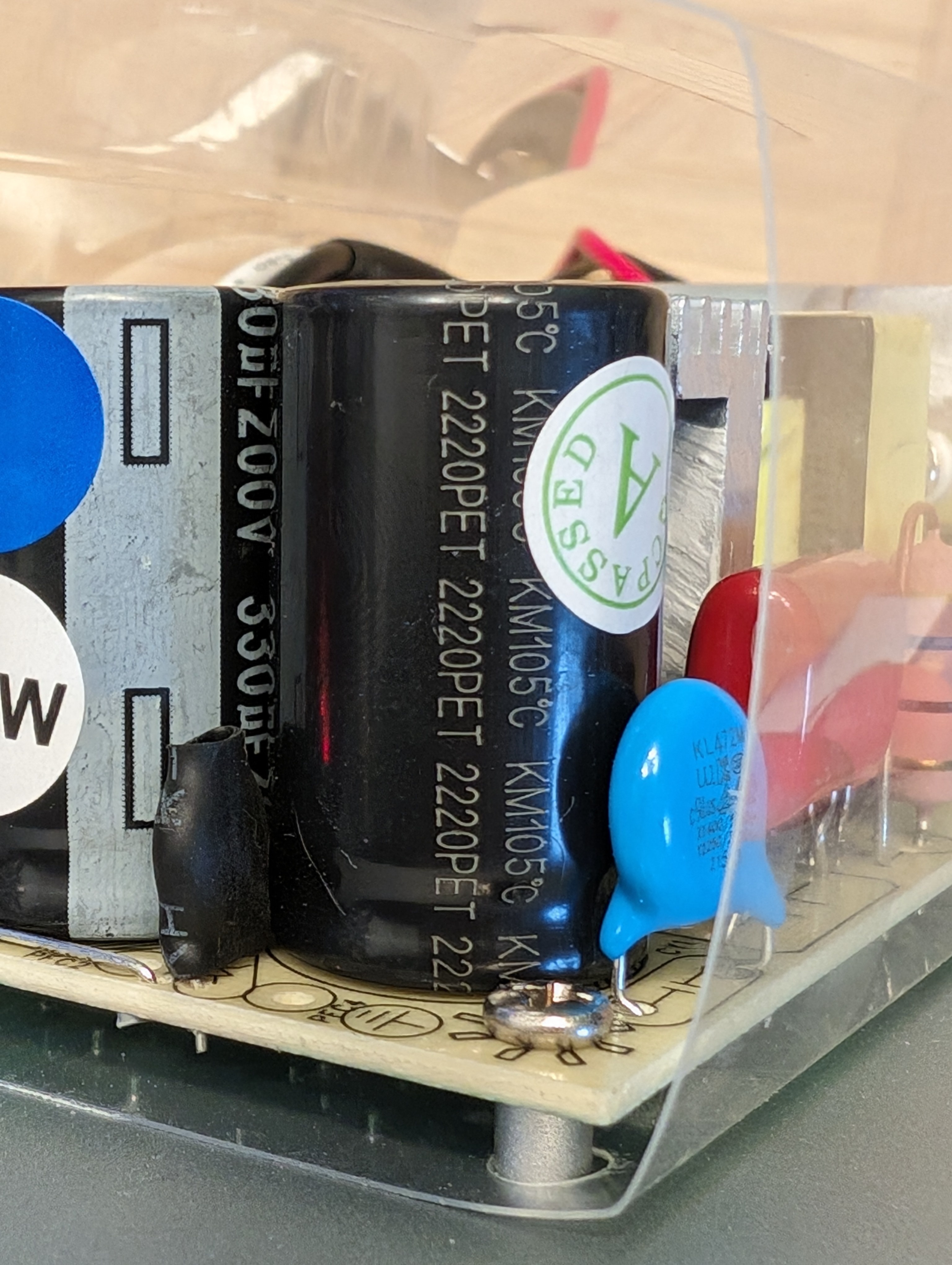

Here's a visual inspiration:

Yes, yes I would use ZFS if I had only one file on my disk.

OK, I think it may have to do with the odd number of data drives. If I create a raidz2 with 4 of the 5 disks, even with ashift=12, recordsize=128K, the performance in sequential single thread read is stellar. What's not clear is why this doesn't affect the 4x 8TB-drive raidz1, or at least not as much.
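A quick sketch of the arithmetic behind that hunch, assuming the original pool is a 5-disk raidz2 (so 3 data drives) and ignoring compression, which changes how many sectors a record actually occupies. The function name is made up for illustration, and this only checks how evenly a record splits across the data drives. It doesn't model the real raidz allocator.

```python
def record_split(recordsize, ashift, disks, parity):
    """Return (data_sectors, data_disks, sectors_per_disk, leftover_sectors)."""
    sector = 1 << ashift                 # ashift=12 means 4 KiB sectors
    data_sectors = recordsize // sector  # a 128K record is 32 sectors
    data_disks = disks - parity
    per_disk, leftover = divmod(data_sectors, data_disks)
    return data_sectors, data_disks, per_disk, leftover


layouts = {
    "5-disk raidz2": (5, 2),
    "4-disk raidz2": (4, 2),
    "4-disk raidz1": (4, 1),
}

for name, (disks, parity) in layouts.items():
    total, data_disks, per_disk, leftover = record_split(128 * 1024, 12, disks, parity)
    print(f"{name}: {total} sectors over {data_disks} data disks "
          f"= {per_disk} each, {leftover} left over")
```

With 2 data drives the 32 sectors split evenly, 16 each. With 3 data drives it's 10 each with 2 left over. The 4-disk raidz1 also has 3 data drives and the same uneven split, which is exactly why its good behaviour is puzzling.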

Found the bit counter

I think the board has reached the end of the road. 😅