no , it's made explicitly as an Android application .

MinekPo1

Tubular , which is a fork of NewPipe , works for me . AFAIK it's maintained by the person who used to maintain NewPipe+SponsorBlock

it seems like it , but I have no idea !

edit : to clarify , I believe it does , using the CC1 & CC2 pins which are also used for other things , but I don't know anything about the USB protocol side , I should learn about it haha

more expensive imo.

actually the same pins (well one of them , though since the connector is rotationally symmetric you need two anyway) are used for USB Power Delivery and to negotiate what speed regime to operate in .

furthermore , USB On-The-Go , which was introduced as a supplement to USB 2.0 , offers the same functionality for USB Micro and USB Mini

actually they would be correct :

USB began as a protocol where one side (USB-A) takes the leading role and the other (USB-B) the following role . this was mandated by hardware with differently shaped plugs and ports . this made sense for the time as USB was meant to connect computers to peripherals .

however some devices don't fit this binary that well : one might want to connect their phone to their computer to pull data off it , but they also might want to connect a keyboard to it , with the small form factor not allowing for both a USB-A and a USB-B port . the solution was USB On-The-Go : USB Mini-A/B/AB and USB Micro-A/B/AB connectors have an additional pin which allows both modes of operation

with USB-C , aside from adding more pins and making the connector rotationally symmetric , a very similar yet differently named feature was included , since USB-C to USB-C connections were planned for

so yeah USB-A to USB-A connections are explicitly not allowed , for much the same reason that you only see CEE 7 (fine , or the objectively worse NEMA) plugs on both ends of a cable in cables made as a joke . USB-C has additional hardware to support both sides using USB-C , which USB-A has in neither the original nor the 3.0 revision .

yeah that's the point , the cybertruck design is retrofuturism

Agh I made a mistake in my code:

    if (recalc || numbers[i] != (hashstate[i] & 0xffffffff)) {
        hashstate[i] = hasher.hash(((uint64_t)p << 32) | numbers[i]);
    }

Since I decided to pack the hashes and previous number values into a single array and then forgot to actually properly format the values, the hash counts generated by my code were nonsense. Not sure why I did that honestly.
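For illustration, here is a minimal sketch of what a properly formatted slot could look like. It assumes `p` in the snippet above is the previous hash, that hashes are truncated to 32 bits, and that the high half of each slot holds the hash while the low half holds the number it was computed from. The names `pack`, `stored_hash`, `stored_number` and `update_slot` are made up for this example and are not from the actual code.

```cpp
#include <cstdint>

// Hypothetical slot layout (an assumption, not the original code):
// high 32 bits = truncated hash, low 32 bits = the number it was computed from.
constexpr std::uint64_t pack(std::uint32_t hash, std::uint32_t number) {
    return (static_cast<std::uint64_t>(hash) << 32) | number;
}

constexpr std::uint32_t stored_hash(std::uint64_t slot) {
    return static_cast<std::uint32_t>(slot >> 32);
}

constexpr std::uint32_t stored_number(std::uint64_t slot) {
    return static_cast<std::uint32_t>(slot & 0xffffffffu);
}

// Rehashes the slot only when forced (recalc) or when the number changed.
// Returns true if the hash function was actually called.
template <class Hash>
bool update_slot(std::uint64_t& slot, std::uint32_t prev_hash,
                 std::uint32_t number, bool recalc, Hash hash) {
    if (recalc || number != stored_number(slot)) {
        // Hash the (previous hash, number) pair, then store the result
        // together with the number so the comparison above stays meaningful.
        const auto h = static_cast<std::uint32_t>(hash(pack(prev_hash, number)));
        slot = pack(h, number);
        return true;
    }
    return false;
}
```

With this layout the low 32 bits of a slot always hold the last number seen, so the `numbers[i] != (hashstate[i] & 0xffffffff)` style check compares like with like.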

Also, my data analysis was trash, since even with the correct data, which as you noted is in a linear correlation with n!, my reasoning suggests that it's growing faster than it is.

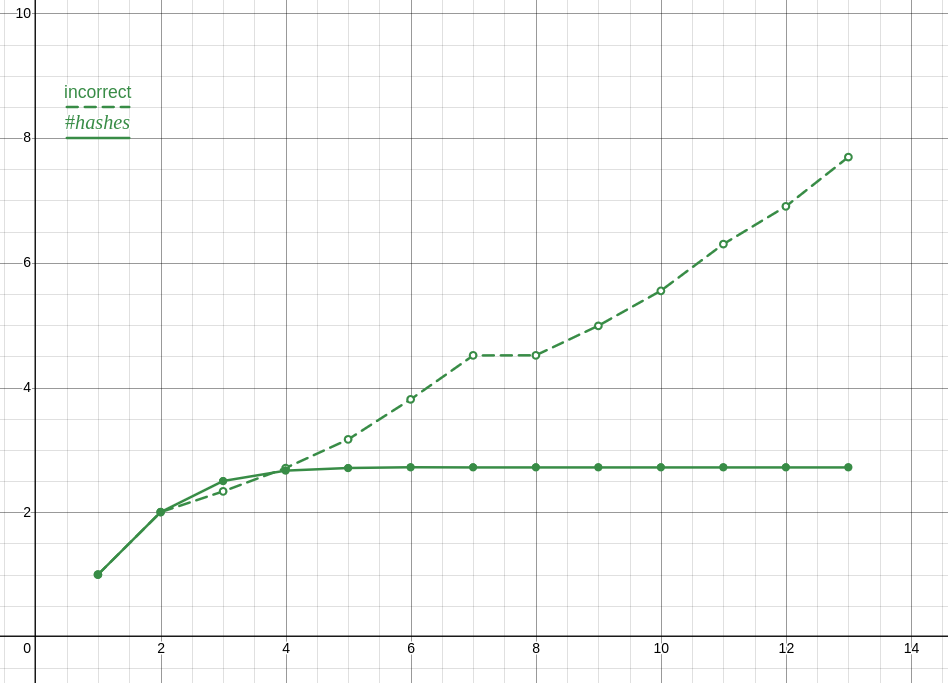

Here is a plot of the incorrect ratios compared to the correct ones, which is the proper analysis and also clearly shows something is wrong.

Anyway, and this is totally unrelated to me losing an internet argument and not coping well with that, I optimized my solution a lot and it turns out it's actually faster to only perform the check you are doing once or twice and narrow it down from there. The checks I'm doing are for the last two elements and the midpoint (though I tried moving that about with seemingly no effect ???) with the end check going to a branch without a loop. I'm not exactly sure why, despite the hour or two I spent profiling, though my guess is that it has something to do with caching?

Also FYI I compared performance with -O3 and after modifying your implementation to use sdbm and to actually use the previous hash instead of the previous value (plus misc changes, see patch).

you forgot about updating the hashes of items after items which were modified , so while it could be slightly faster than O((n!×n)²) , not by much as my data shows .

in other words , every time you update the first hash you also need to update all the hashes after it , etcetera

so the complexity is O(n×n + n×(n-1)×(n-1)+...+n!×1) , though I don't know how to simplify that
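One way to get a feel for that sum is to just evaluate it. Taken at face value, the k-th term is n!/(n-k)! × (n-k+1), and substituting j = n-k turns the whole thing into n! × Σ (j+1)/j! for j from 0 to n-1. That series converges to 2e, so the sum as written would stay below 2e × n!. The sketch below only checks this numerically. The names `rehash_sum` and `factorial` are made up for the example, and it is only safe for n ≤ 20 before `uint64_t` overflows.

```cpp
#include <cstdint>

// Evaluates n*n + n*(n-1)*(n-1) + ... + n!*1 exactly as written above.
// Term k (k = 1..n) is n!/(n-k)! * (n-k+1).
// Only valid for n <= 20, beyond that the arithmetic overflows 64 bits.
std::uint64_t rehash_sum(unsigned n) {
    std::uint64_t prefix = 1;  // n * (n-1) * ... * (n-k+1)
    std::uint64_t total = 0;
    for (unsigned k = 1; k <= n; ++k) {
        prefix *= (n - k + 1);
        total += prefix * (n - k + 1);
    }
    return total;
}

// Plain n!, used to compare the sum against.
std::uint64_t factorial(unsigned n) {
    std::uint64_t f = 1;
    for (unsigned i = 2; i <= n; ++i) f *= i;
    return f;
}
```

For n = 3 this gives 9 + 12 + 6 = 27, which is 4.5 × 3!. Whether the sum itself models the real number of rehashes is a separate question.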

actually all of my effort was wasted since calculating the hamming distance between two lists of n hashes has a complexity of O(n) not O(1) agh

I realized this right after walking away from my devices to eat something :(

edit : you can calculate the hamming distance one element at a time just after rehashing that element so never mind
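A minimal sketch of that idea, assuming "hamming distance" here means the number of positions at which the two hash lists differ and that both lists have the same length. `RunningDistance` and its members are hypothetical names for this example only.

```cpp
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Keeps the number of mismatching positions between `current` and `target`
// up to date, so changing one hash costs O(1) instead of an O(n) rescan.
struct RunningDistance {
    std::vector<std::uint64_t> current;
    std::vector<std::uint64_t> target;
    std::size_t distance = 0;

    // Assumes cur and tgt have the same length.
    RunningDistance(std::vector<std::uint64_t> cur, std::vector<std::uint64_t> tgt)
        : current(std::move(cur)), target(std::move(tgt)) {
        for (std::size_t i = 0; i < current.size(); ++i)
            distance += (current[i] != target[i]);
    }

    // Call right after rehashing element i.
    void set(std::size_t i, std::uint64_t new_hash) {
        distance -= (current[i] != target[i]);  // remove the old contribution
        current[i] = new_hash;
        distance += (current[i] != target[i]);  // add the new contribution
    }
};
```

The full scan happens once in the constructor. After that, each rehash adjusts the count by at most one.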

honestly I was very suspicious that you could get away with only calling the hash function once per permutation , but I couldn't think how to prove it one way or another.

so I implemented it, first in python for prototyping then in c++ for longer runs... well only half of it, i.e. iterating over permutations and computing the hash, but not doing anything with it. unfortunately my implementation is O(n²) anyway, unsure if there is a way to optimize it, whatever. code
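For anyone who wants to poke at the same question, here is a small independent sketch (not the linked implementation) that walks permutations in lexicographic order and counts hash calls. It assumes a chained hash, where the hash at position i depends on the hash at position i-1, and it only rehashes from the first position that changed. `mix` is an sdbm-style step used as a stand-in, and `count_hash_calls` is a made-up name.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <numeric>
#include <vector>

// sdbm-style mixing step, used here only as a stand-in hash.
std::uint64_t mix(std::uint64_t prev, std::uint32_t value) {
    return value + (prev << 6) + (prev << 16) - prev;
}

// Walks all permutations of 0..n-1 in lexicographic order, keeping a chain
// h[i] = mix(h[i-1], a[i]), and counts how many mix() calls are made when
// only the entries from the first changed position onward are rehashed.
std::uint64_t count_hash_calls(unsigned n) {
    std::vector<std::uint32_t> a(n), prev(n);
    std::iota(a.begin(), a.end(), 0u);
    std::vector<std::uint64_t> h(n);
    std::uint64_t calls = 0;
    bool first = true;
    do {
        // Find the first position that differs from the previous permutation.
        std::size_t start = 0;
        if (!first)
            while (start < n && a[start] == prev[start]) ++start;
        // Everything from there on depends on a changed hash, so redo it.
        for (std::size_t i = start; i < n; ++i) {
            h[i] = mix(i ? h[i - 1] : 0, a[i]);
            ++calls;
        }
        prev = a;
        first = false;
    } while (std::next_permutation(a.begin(), a.end()));
    return calls;
}
```

For n = 3 this makes 15 calls and for n = 4 it makes 64. A different iteration order or hash layout would give different counts.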

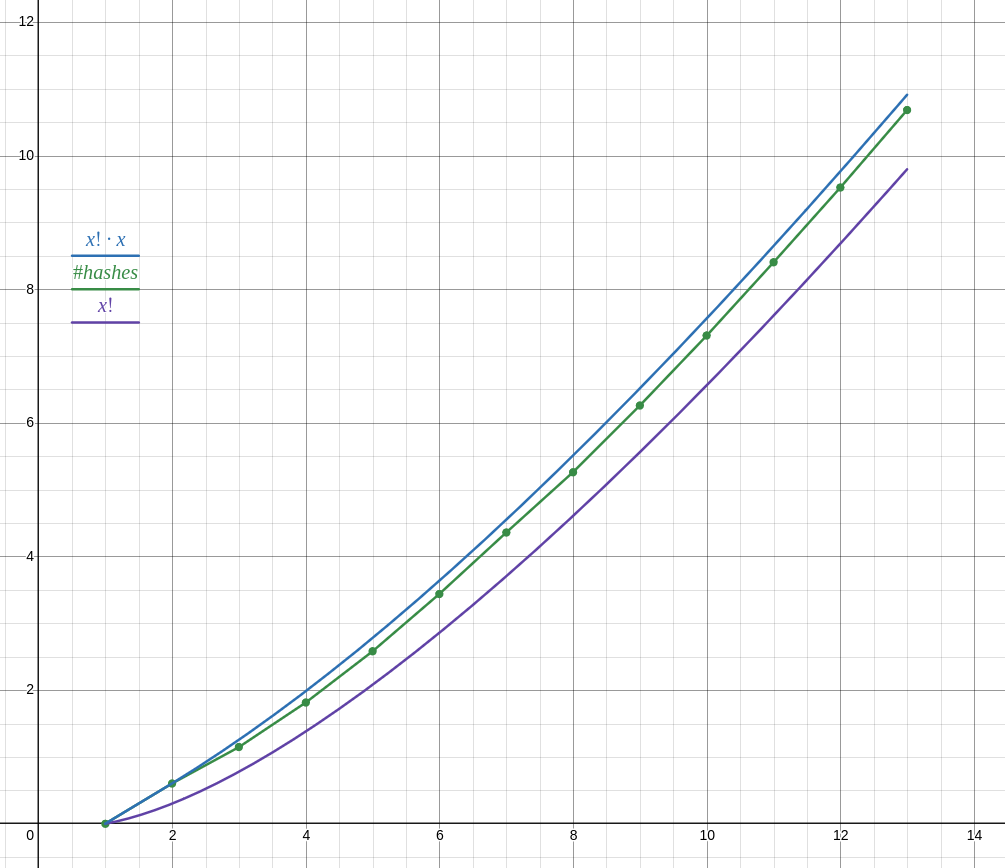

as of writing I have results for lists of n ∈ 1 .. 13 (13 took 18 minutes, 12 took about 1 minute, can't be bothered to run it for longer) and the number of hashes does not follow n! as your reasoning suggests, but is closer to n! ⋅ n.

anyway with your proposed function it doesn't seem to be possible to achieve O(n!²) complexity

also don't be so negative about your own creation. you could write an entire paper about this problem imho and have a problem with your name on it. though I would rather not have to title a paper "complexity of the magic lobster party problem" so yeah

unless the problem space includes all possible functions f , function f must itself have a complexity of at least n to use every number from both lists , else we can ignore some elements of either of the lists , thereby lowering the complexity below O(n!²)

if the problem space does include all possible functions f , I feel like it will still be faster complexity wise to find what elements the function is dependent on than to assume it depends on every element , therefore either the problem cannot be solved in O(n!²) or it can be solved quicker

honestly , while I agree that slightly longer keys won't be safe for long , tbh I'm gonna sit a bit more on my 23-bit RSA keys before migrating