This is the free healthcare Americans will be allowed to have. Can't wait for a hallucinating AI to diagnose me with being a dog or something🙄

Wait but I already have that diagnosis..?

I got that dawg in me

pubpy...

Can we roll back to "big data"? That felt promising because the implication was a human would be guiding it and interpreting it.

I only skimmed the article. Do these results rely on the patient giving informative answers that are easily parsed by the AI?

For example, "my arm hurts" might give a different diagnosis than "my shoulder and upper biceps are swollen after my workout an hour ago".

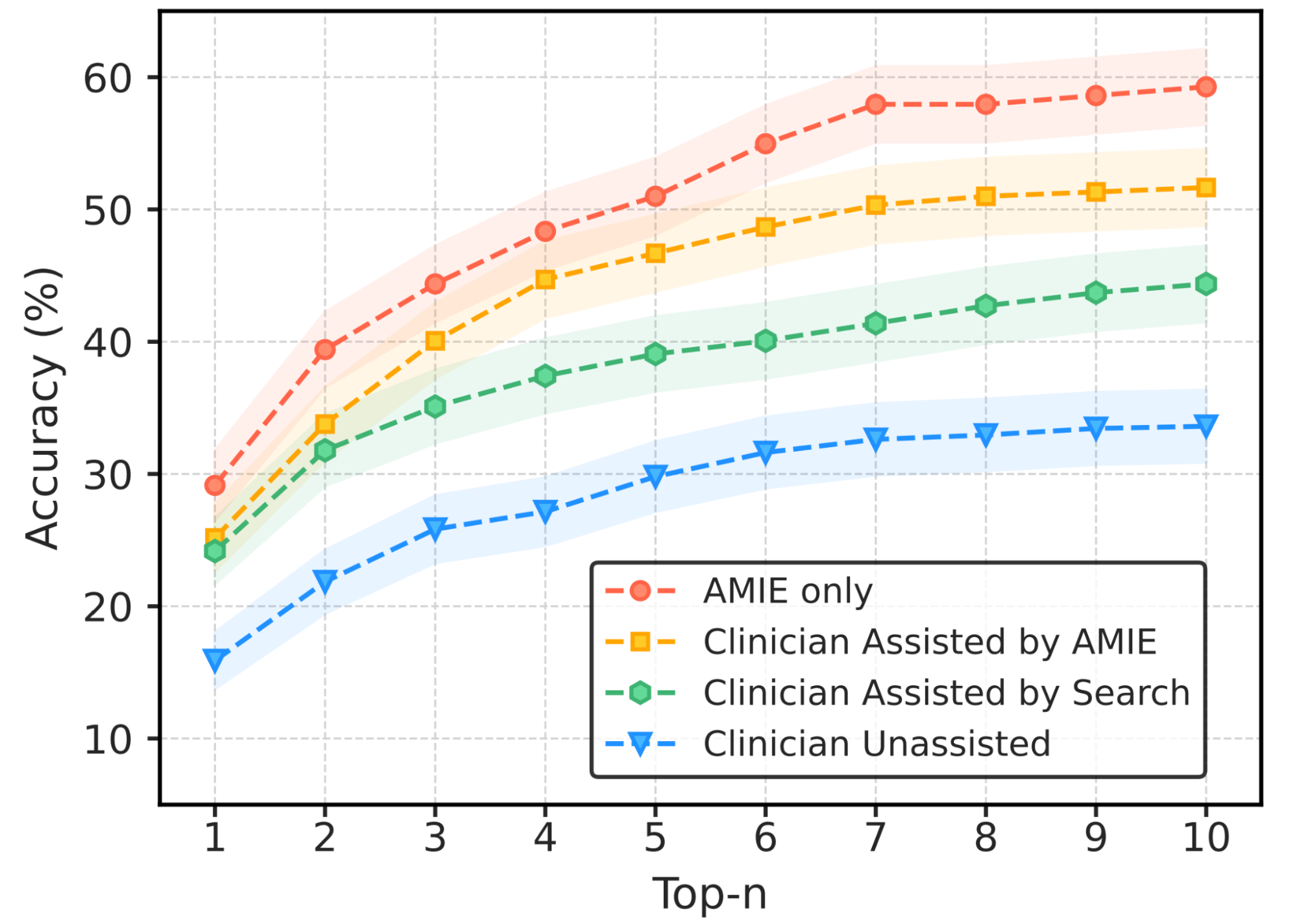

So there were two different configurations the model was evaluated against.

In the first, they simulated patients and had them interact in an LLM-like chat environment. There, the model and real physicians were both scored using an evaluation method called OSCE.

The other had the model and physicians diagnose old cases pulled from journals.

While the models arguably perform better in these environments, I don't think anyone would consider these real-world situations. It seems closer to "LLMs are able to pass the bar" than "LLMs are able to practice law": we've seen the former, but not the latter.

Additionally, Google will be on my "approach with caution" list for a while after the Gemini fiasco: https://arstechnica.com/information-technology/2023/12/google-admits-it-fudged-a-gemini-ai-demo-video-which-critics-say-misled-viewers/