this post was submitted on 21 Oct 2024

399 points (96.5% liked)

Programmer Humor





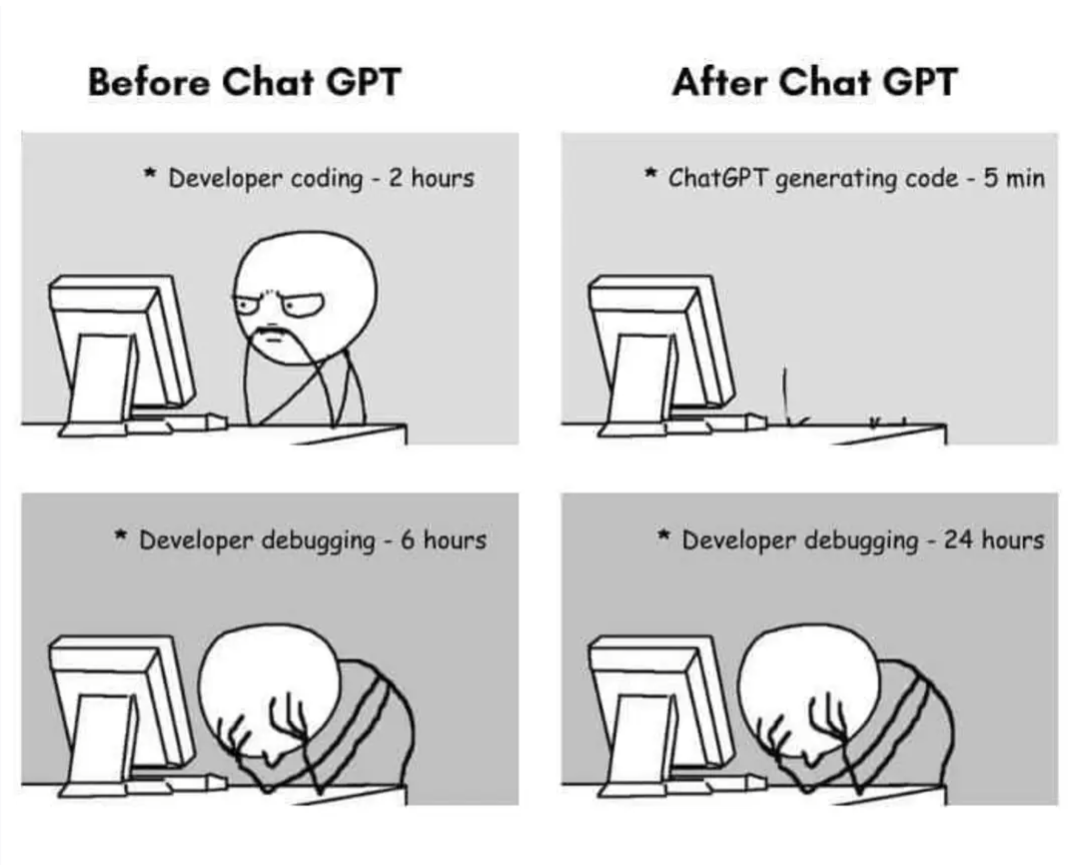

I haven't been in development for nearly 20 years now, but I assumed it worked like this:

You write unit tests for a very specific function of rather limited scope, then you let the AI generate the function. How could this work otherwise?
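To make the tests-first idea concrete, here is a minimal Python sketch. Everything in it is made up for illustration: `slugify` is a hypothetical function, the tests stand in for the part a human would write first, and the function body stands in for what one might accept from an AI once the tests pass.

```python
import re


# Step 1 (human): pin down the expected behavior with small, specific tests.
def test_spaces_become_hyphens():
    assert slugify("Hello World") == "hello-world"


def test_punctuation_is_dropped():
    assert slugify("  AI: friend or foe?  ") == "ai-friend-or-foe"


def test_empty_input():
    assert slugify("") == ""


# Step 2 (AI, then human review): a candidate implementation that is only
# accepted once all of the tests above pass.
def slugify(title: str) -> str:
    # Lowercase, then collapse every run of non-alphanumerics into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    # Trim hyphens left over from leading or trailing punctuation.
    return slug.strip("-")
```

The tests are plain functions with assertions, so a runner such as pytest can collect them, or they can be called by hand. The narrow scope is the point: the tests are the specification, and they stay small enough to review in one sitting.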

Bonus points if you let the AI divide your overall problem into smaller problems of manageable size. That wouldn't involve code generation as such...

Am I wrong about this approach?

I tend to write a comment describing what I want to do, and have Copilot suggest the next 1-8 lines for me. I then check whether the code is correct and fix it if necessary.

For small tasks it's usually good enough, and I've already written a comment explaining what the code does. It can also be convenient to use it to explore an unknown library or functionality quickly.
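As a rough illustration of that comment-first workflow (a made-up example, not actual Copilot output): the comment inside the function is what you'd type yourself, and the two lines under it are the kind of short suggestion you'd then review. `most_common_words` is a hypothetical name.

```python
from collections import Counter


def most_common_words(text: str, n: int) -> list[tuple[str, int]]:
    # Return the n most frequent words in text, ignoring case.
    words = text.lower().split()
    return Counter(words).most_common(n)
```

Even for a snippet this small the review step matters: splitting on whitespace keeps punctuation attached to words, which may or may not be what the comment meant.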

"Unknown library" often means a rather small, sparsely documented, and rarely used library tho, for me. Which means AI makes everything even worse by hallucinating.

I meant a library unknown to me specifically. I do encounter hallucinations every now and then but usually they're quickly fixable.

It's made me a little bit faster, sometimes. It's certainly not like a 50-100% increase or anything, maybe like a 5-10% at best?